“The most expensive mistake in enterprise AI is not choosing the wrong model; it is misunderstanding which game you are playing.” What is our AI strategy? The pressure to adopt Artificial Intelligence is immense, and the penalties for inaction feel existential. Yet for many executives, stepping into the AI landscape feels less like strategic planning […]

Why Your AI Agents Are One Update Away from Breaking

An organisation that cannot remember what it decided, or why, is condemned to decide the same things over and over again, each time believing it is the first.

Where Emotions Lie Inside a Neural Network: A CT Scan of LLM Hidden States

Large language models respond to emotionally charged inputs with contextually appropriate outputs, but the mechanism by which they represent, propagate, and modulate emotional tone through their internal layers remains poorly understood. Do emotions “live” in specific layers? Is the signal carried by the attention mechanism, the MLP, or the residual stream itself? And when a model is instructed to be a “helpful assistant,” does its internal representation remain emotionally neutral, or does it mirror the user’s emotional state?

The Memory That Stays. Part 2

The tool is never the bottleneck. The bottleneck is everything the tool cannot see, cannot access, and does not know it should ask about. The Illusion of the Ready-Made Agent: In recent months, Anthropic’s Cowork graduated from research preview to general availability across all paid plans, bringing desktop-native agentic capabilities to marketing, finance, legal, […]

The Memory That Stays. Part 1

An organisation that cannot remember what it decided, or why, is condemned to decide the same things over and over again, each time believing it is the first.

Small LLM Performance Benchmark – Research Report

This report presents the results of a systematic evaluation of 22 quantized open-source language models across description generation tasks, measuring quality, JSON reliability, and inference efficiency.

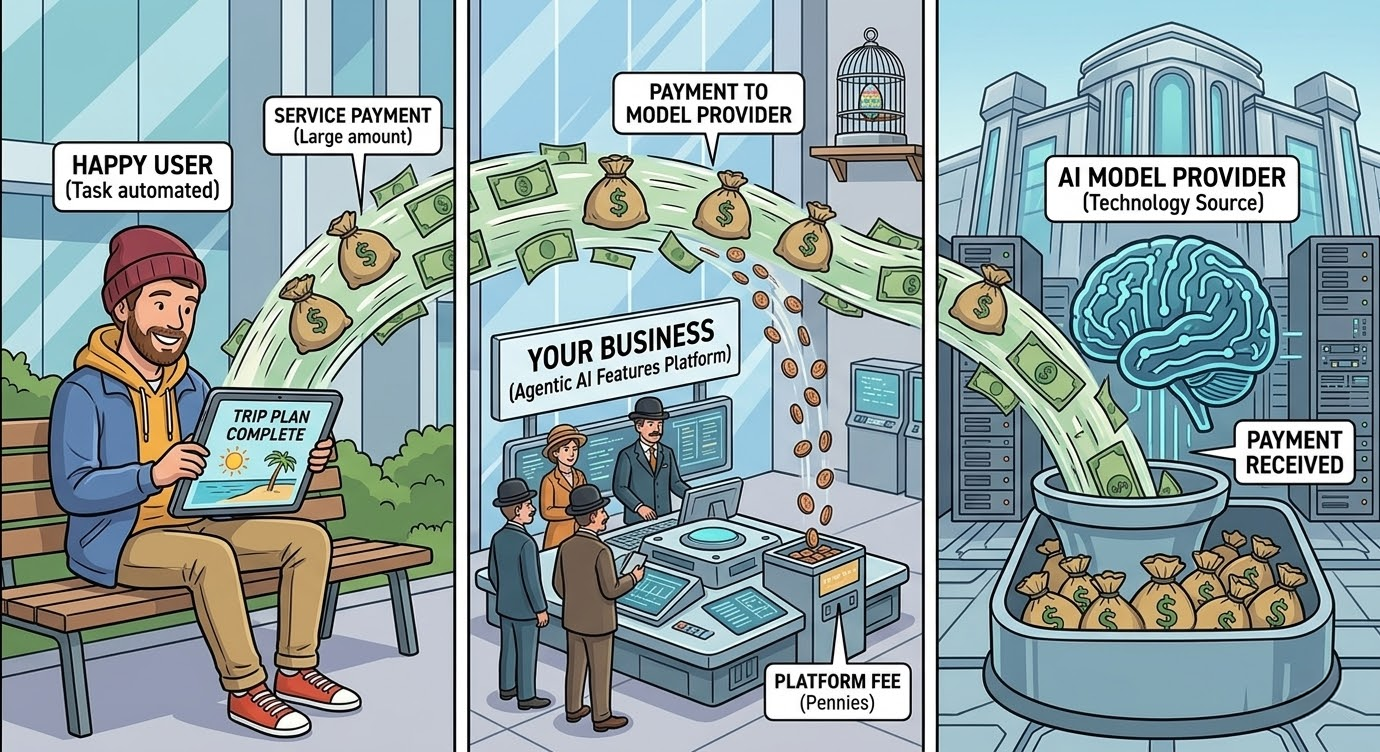

The Planning Tax: Why Your AI Agent Feature Might Be Your Worst Investment

Your best feature may be destroying your margins, and your engineering team has no idea. This article isn’t about AI as a productivity tool. It’s about AI as a cost structure, embedded in your product, triggered by your users, and scaling with your revenue. The AI agents embedded in your product are generating a […]

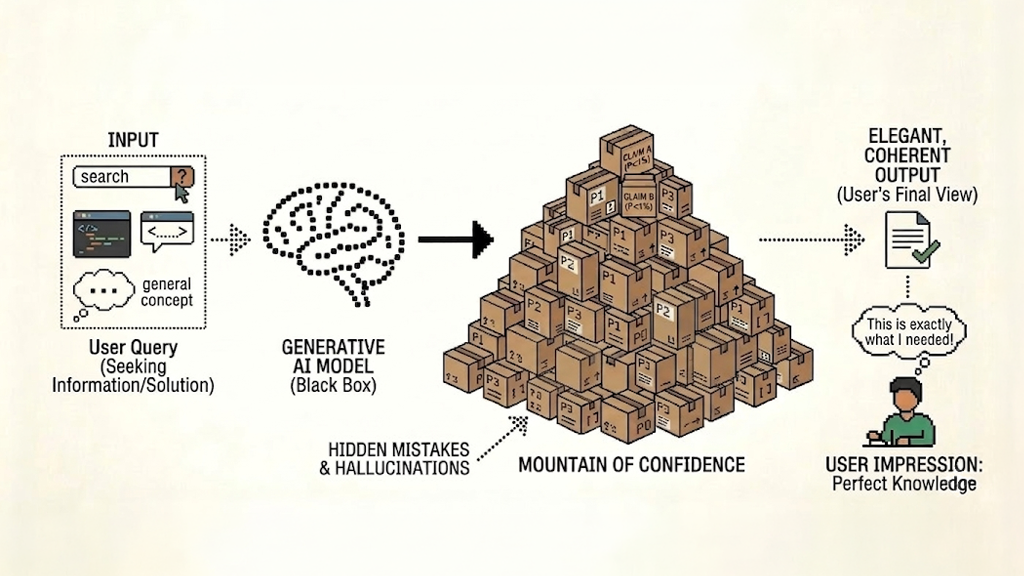

The Illusion of Knowing: How Generative AI Buries Mistakes in a Mountain of Confidence

“The information you never see is, by definition, the information you cannot evaluate. And when the machine decides what is relevant on your behalf, the missing piece becomes the needle in a haystack you did not even know existed.” The Seductive Logic of the Machine “Something quiet and consequential is happening to […]

The AI Efficiency Trap: Why Architecture Matters More Than Token Windows

The marketing narrative for 2025/2026 is seductive: models offer context windows of 1 million to 10 million tokens. The implication is that you can simply “paste your entire codebase” into the prompt and the AI will reason perfectly across it. The Reality: This is operationally false and financially dangerous. Current research identifies a phenomenon known […]

The Shift to Business-to-Agent (B2A) Commerce: Why Your Product Descriptions Are Now Your Most Critical Sales Asset

Research Report, March 2026 | AscentCore. This research was conducted by AscentCore using a controlled simulation framework that tests AI agent purchasing behavior across competitive marketplaces. For methodology details, replication data, or to run this experiment on your own product category, contact the authors. 1. The Problem: Your Next Customer Isn’t Human. For decades, […]