This report presents the results of a systematic evaluation of 22 quantized open-source language models across description generation tasks, measuring quality, JSON reliability, and inference efficiency.

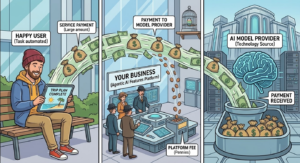

When you run thousands of small, focused tasks through a cloud LLM API (classifying a document, tagging a record, generating a description, extracting a field), the per-call fee is negligible. Multiply it by millions of daily operations across a production pipeline, and the bill becomes a boardroom conversation.

For real-time, customer-facing interactions, that premium is often justified. But most automated backend tasks don’t need real-time response. They need accuracy, reliability, and throughput at a cost that doesn’t erode your margin.

Smaller, locally deployed language models are a proven answer to this problem. Models in the 1B–14B parameter range, running on-premises or on modest cloud GPU instances under GGUF quantization, can handle the long tail of focused automation tasks (description generation, classification, summarization, structured data extraction) at a fraction of the API cost and with zero per-call billing.

The engineering barrier to deploying them has dropped significantly. The question is no longer whether you can run them, but which one you should run.

AscentCore evaluated 22 quantized configurations of 12 open-source models, from 1B to 14B parameters, under Q4 and Q8 quantization, across six description generation task variants.

We measured quality (ROUGE, factual consistency), structured output reliability (JSON parse rate, schema compliance), and inference efficiency (latency, tokens per second).

The results cut through the noise: Mistral 7B leads on raw quality, Qwen 2.5 7B delivers the best quality-to-speed ratio, and Llama 3.1 8B is the safest choice when your pipeline demands strict JSON schema compliance. For edge or mobile workloads, Qwen 2.5 1.5B holds up remarkably well at 167 tokens per second.

Key figures: 22 configurations, 6 task variants, top ROUGE-L 0.509, top schema compliance 95.7%, peak throughput 226 TPS.

Experimental Setup

We evaluated 12 base models spanning two parameter tiers under Q4_K_M and Q8_0 GGUF quantization. The results table reports Gemma 3 12B and Phi-3 Medium under Q4_K_M only, which gives 22 configurations in total. All local models were served via Ollama for standardized inference.

Tier A (under 4B, edge/mobile candidates):

- Gemma 3 1B

- Llama 3.2 1B

- Llama 3.2 3B

- Qwen 2.5 1.5B

- Phi-3.5 Mini (3.8B)

- SmolLM2 1.7B

Tier B (4–14B, server-light/desktop candidates):

- Gemma 3 4B

- Gemma 3 12B

- Llama 3.1 8B

- Mistral 7B v0.3

- Qwen 2.5 7B

- Phi-3 Medium (14B)

Task Definition

Three description complexity levels were defined:

- Brief (1–2 sentences, max 50 words), targeting tooltips and search snippets.

- Mid-Level (3–5 sentences, 75–150 words), targeting product pages and executive summaries.

- Strong (2–4 paragraphs, 200–400 words), targeting reports and in-depth documentation.

Each level was tested with both a plain text output prompt and a structured JSON output prompt requiring specific schema adherence, yielding six task variants total.
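As a minimal sketch, the six task variants and the word limits above can be expressed as follows. The variant names (`brief_text`, `mid_json`, and so on) and the helper functions are hypothetical, and the lower bound of 1 word for Brief is an assumption, since only a maximum is stated.

```python
from itertools import product

# Word limits per complexity level, taken from the level definitions above.
# Brief has no stated minimum, so the lower bound of 1 is an assumption.
LEVELS = {
    "brief": (1, 50),
    "mid": (75, 150),
    "strong": (200, 400),
}
FORMATS = ("text", "json")


def task_variants():
    """Cross every complexity level with every output format."""
    return [f"{level}_{fmt}" for level, fmt in product(LEVELS, FORMATS)]


def within_word_limits(text, level):
    """Check a generated description against the word limits of its level."""
    low, high = LEVELS[level]
    return low <= len(text.split()) <= high


if __name__ == "__main__":
    print(task_variants())
    print(within_word_limits("A short tooltip description.", "brief"))
```

A check like `within_word_limits` is one plausible way to score length compliance; the report does not state how its own length check was implemented.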

Metrics

Quality was measured via ROUGE-1, ROUGE-2, and ROUGE-L (lexical overlap with reference), factual consistency (NLI-based alignment scoring), and length compliance.
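To make the lexical-overlap metrics concrete, here is a simplified, standard-library sketch of ROUGE-N and ROUGE-L as F1 scores. It assumes lowercase whitespace tokenization with no stemming; the report does not name its ROUGE implementation, so scores from this sketch will not necessarily match the table.

```python
from collections import Counter


def _f1(overlap, cand_len, ref_len):
    """Harmonic mean of precision (overlap/candidate) and recall (overlap/reference)."""
    if overlap == 0:
        return 0.0
    precision = overlap / cand_len
    recall = overlap / ref_len
    return 2 * precision * recall / (precision + recall)


def rouge_n(candidate, reference, n=1):
    """ROUGE-N F1: clipped n-gram overlap between candidate and reference."""
    def ngrams(tokens):
        return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

    cand = ngrams(candidate.lower().split())
    ref = ngrams(reference.lower().split())
    overlap = sum((cand & ref).values())  # Counter intersection clips counts
    return _f1(overlap, sum(cand.values()), sum(ref.values()))


def rouge_l(candidate, reference):
    """ROUGE-L F1: based on the longest common subsequence of tokens."""
    a, b = candidate.lower().split(), reference.lower().split()
    prev = [0] * (len(b) + 1)
    for tok in a:
        cur = [0]
        for j, other in enumerate(b, 1):
            if tok == other:
                cur.append(prev[j - 1] + 1)
            else:
                cur.append(max(prev[j], cur[j - 1]))
        prev = cur
    return _f1(prev[-1], len(a), len(b))


if __name__ == "__main__":
    print(rouge_n("the cat sat", "the cat slept", n=1))
    print(rouge_l("the cat sat", "the cat slept"))
```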

For JSON tasks, we additionally measured JSON parse rate (syntactic validity), schema compliance rate (structural correctness via jsonschema validation), field completeness, and extraneous output rate. Efficiency was captured through time to first token (TTFT), total latency, and tokens per second (TPS).
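The efficiency metrics can be sketched around any streaming token iterator. In this illustration `token_iter` is a hypothetical stand-in for a streaming client response, and TPS is computed as tokens divided by total wall-clock time, which is an assumption; the report does not define whether TTFT is excluded from its TPS figure.

```python
import time


def measure_stream(token_iter):
    """Time a token stream; returns (ttft_ms, total_ms, tokens_per_sec)."""
    start = time.perf_counter()
    first = None
    count = 0
    for _ in token_iter:
        if first is None:
            first = time.perf_counter()  # moment the first token arrived
        count += 1
    end = time.perf_counter()

    total = end - start
    if first is None:  # the stream produced no tokens
        return None, total * 1000, 0.0
    tps = count / total if total > 0 else 0.0
    return (first - start) * 1000, total * 1000, tps


def fake_stream(tokens, delay=0.01):
    """Hypothetical generator that simulates per-token generation delay."""
    for tok in tokens:
        time.sleep(delay)
        yield tok


if __name__ == "__main__":
    ttft, total, tps = measure_stream(fake_stream(["a", "b", "c"]))
    print(f"TTFT {ttft:.1f} ms, total {total:.1f} ms, {tps:.1f} tokens/s")
```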

Full Results Table

| Model | Tier | ROUGE-1 | ROUGE-2 | ROUGE-L | Factual | TPS | Latency (ms) | TTFT (ms) | JSON Parse % | Schema % | Len Comply % | Repetition |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| gemma3:1b-q4_K_M | A | 0.545 | 0.266 | 0.388 | 0.587 | 163.5 | 1,968 | 234 | 82.6% | 4.3% | 78.3% | 0.003 |
| gemma3:1b-q8_0 | A | 0.557 | 0.276 | 0.396 | 0.642 | 146.7 | 2,143 | 255 | 95.7% | 4.3% | 73.9% | 0.003 |
| llama3.2:1b-q4_K_M | A | 0.537 | 0.278 | 0.390 | 0.584 | 226.5 | 1,088 | 171 | 65.2% | 30.4% | 82.6% | 0.008 |
| llama3.2:1b-q8_0 | A | 0.521 | 0.270 | 0.378 | 0.699 | 160.2 | 1,280 | 190 | 56.5% | 30.4% | 95.7% | 0.005 |
| qwen2.5:1.5b-q4_K_M | A | 0.561 | 0.271 | 0.398 | 0.593 | 167.5 | 1,414 | 207 | 95.7% | 47.8% | 87.0% | 0.006 |
| qwen2.5:1.5b-q8_0 | A | 0.583 | 0.293 | 0.421 | 0.716 | 121.9 | 1,803 | 205 | 95.7% | 56.5% | 95.7% | 0.006 |
| smollm2:1.7b-q4_K_M | A | 0.561 | 0.309 | 0.408 | 0.728 | 157.3 | 1,480 | 192 | 26.1% | 4.3% | 87.0% | 0.003 |
| smollm2:1.7b-q8_0 | A | 0.552 | 0.299 | 0.407 | 0.682 | 109.6 | 2,059 | 204 | 56.5% | 26.1% | 82.6% | 0.004 |
| llama3.2:3b-q4_K_M | A | 0.584 | 0.316 | 0.436 | 0.674 | 98.7 | 2,031 | 316 | 47.8% | 34.8% | 100.0% | 0.004 |
| llama3.2:3b-q8_0 | A | 0.615 | 0.345 | 0.464 | 0.650 | 65.2 | 2,885 | 345 | 56.5% | 52.2% | 100.0% | 0.002 |
| phi3.5:3.8b-q4_K_M | A | 0.536 | 0.226 | 0.353 | 0.749 | 69.3 | 5,824 | 516 | 87.0% | 65.2% | 30.4% | 0.052 |
| phi3.5:3.8b-q8_0 | A | 0.546 | 0.233 | 0.364 | 0.664 | 50.7 | 6,580 | 526 | 78.3% | 56.5% | 47.8% | 0.003 |
| gemma3:4b-q4_K_M | B | 0.578 | 0.295 | 0.427 | 0.697 | 71.6 | 3,927 | 452 | 100.0% | 87.0% | 78.3% | 0.002 |
| gemma3:4b-q8_0 | B | 0.574 | 0.281 | 0.410 | 0.715 | 49.1 | 5,552 | 465 | 100.0% | 69.6% | 78.3% | 0.001 |
| qwen2.5:7b-q4_K_M | B | 0.612 | 0.336 | 0.462 | 0.705 | 48.1 | 4,270 | 606 | 95.7% | 73.9% | 95.7% | 0.003 |
| qwen2.5:7b-q8_0 | B | 0.627 | 0.348 | 0.474 | 0.734 | 31.0 | 5,977 | 679 | 95.7% | 65.2% | 95.7% | 0.001 |
| mistral:7b-q4_K_M | B | 0.650 | 0.375 | 0.496 | 0.762 | 49.0 | 5,900 | 657 | 95.7% | 39.1% | 82.6% | 0.009 |
| mistral:7b-q8_0 | B | 0.653 | 0.377 | 0.509 | 0.741 | 31.2 | 8,760 | 731 | 100.0% | 47.8% | 91.3% | 0.015 |
| llama3.1:8b-q4_K_M | B | 0.601 | 0.332 | 0.447 | 0.688 | 46.6 | 4,506 | 641 | 91.3% | 91.3% | 100.0% | 0.006 |
| llama3.1:8b-q8_0 | B | 0.609 | 0.342 | 0.454 | 0.662 | 29.3 | 6,788 | 724 | 100.0% | 95.7% | 100.0% | 0.005 |
| gemma3:12b-q4_K_M | B | 0.598 | 0.311 | 0.435 | 0.699 | 27.3 | 10,545 | 1,171 | 100.0% | 43.5% | 78.3% | 0.001 |
| phi3:14b-q4_K_M | B | 0.612 | 0.314 | 0.433 | 0.731 | 23.6 | 12,882 | 1,402 | 95.7% | 78.3% | 65.2% | 0.004 |

Visual Comparisons

Charts: ROUGE-L by model (average over all tasks); factual consistency by model; tokens per second (log scale); BERTScore by task variant, Mistral 7B vs Llama 3.1 8B.

JSON Reliability

JSON output mode introduces additional failure modes beyond text quality. The gap between parse rate and schema compliance is critical: a model that can produce valid JSON may still fail to match the required field structure.
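The distinction between the two rates can be illustrated with a short sketch. The report validated structure with jsonschema; the version below is a simplified standard-library stand-in, and the schema (`title` and `description` as strings, no extra keys) is hypothetical.

```python
import json

# Hypothetical schema: required keys and their expected Python types.
REQUIRED = {"title": str, "description": str}


def score_output(raw):
    """Return (parsed, schema_ok) for one raw model response."""
    try:
        obj = json.loads(raw)
    except json.JSONDecodeError:
        return False, False  # not even syntactically valid JSON
    schema_ok = (
        isinstance(obj, dict)
        and set(obj) == set(REQUIRED)  # strict: no missing or extra keys
        and all(isinstance(obj[key], typ) for key, typ in REQUIRED.items())
    )
    return True, schema_ok


def rates(outputs):
    """Aggregate parse rate and schema compliance rate over a batch."""
    scored = [score_output(o) for o in outputs]
    n = len(scored)
    parse_rate = sum(parsed for parsed, _ in scored) / n
    schema_rate = sum(ok for _, ok in scored) / n
    return parse_rate, schema_rate


if __name__ == "__main__":
    batch = [
        '{"title": "A", "description": "B"}',  # parses and matches the schema
        '{"title": "A"}',                      # parses but is missing a field
        "not json",                            # fails to parse
    ]
    print(rates(batch))
```

The second sample is the case the gap describes: syntactically valid JSON that still fails the required field structure.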

| Model | Tier | JSON Parse % | Schema Comply % | Field Completeness | Extraneous Output % | Key Accuracy |
|---|---|---|---|---|---|---|
| gemma3:1b-q4_K_M | A | 82.6% | 4.3% | 0.826 | 100.0% | 0.826 |
| gemma3:1b-q8_0 | A | 95.7% | 4.3% | 0.957 | 100.0% | 0.957 |
| llama3.2:1b-q4_K_M | A | 65.2% | 30.4% | 0.652 | 4.3% | 0.652 |
| llama3.2:1b-q8_0 | A | 56.5% | 30.4% | 0.565 | 0.0% | 0.565 |
| qwen2.5:1.5b-q4_K_M | A | 95.7% | 47.8% | 0.957 | 4.3% | 0.957 |
| qwen2.5:1.5b-q8_0 | A | 95.7% | 56.5% | 0.957 | 4.3% | 0.957 |
| smollm2:1.7b-q4_K_M | A | 26.1% | 4.3% | 0.217 | 0.0% | 0.261 |
| smollm2:1.7b-q8_0 | A | 56.5% | 26.1% | 0.533 | 0.0% | 0.538 |
| llama3.2:3b-q4_K_M | A | 47.8% | 34.8% | 0.478 | 0.0% | 0.478 |
| llama3.2:3b-q8_0 | A | 56.5% | 52.2% | 0.565 | 0.0% | 0.565 |
| phi3.5:3.8b-q4_K_M | A | 87.0% | 65.2% | 0.870 | 65.2% | 0.870 |
| phi3.5:3.8b-q8_0 | A | 78.3% | 56.5% | 0.783 | 30.4% | 0.783 |
| gemma3:4b-q4_K_M | B | 100.0% | 87.0% | 1.000 | 100.0% | 1.000 |
| gemma3:4b-q8_0 | B | 100.0% | 69.6% | 1.000 | 100.0% | 1.000 |
| qwen2.5:7b-q4_K_M | B | 95.7% | 73.9% | 0.957 | 0.0% | 0.957 |
| qwen2.5:7b-q8_0 | B | 95.7% | 65.2% | 0.957 | 0.0% | 0.957 |
| mistral:7b-q4_K_M | B | 95.7% | 39.1% | 0.957 | 0.0% | 0.957 |
| mistral:7b-q8_0 | B | 100.0% | 47.8% | 1.000 | 0.0% | 1.000 |
| llama3.1:8b-q4_K_M | B | 91.3% | 91.3% | 0.913 | 0.0% | 0.913 |
| llama3.1:8b-q8_0 | B | 100.0% | 95.7% | 1.000 | 0.0% | 1.000 |
| gemma3:12b-q4_K_M | B | 100.0% | 43.5% | 1.000 | 100.0% | 1.000 |
| phi3:14b-q4_K_M | B | 95.7% | 78.3% | 0.957 | 34.8% | 0.957 |

Chart: JSON parse rate vs schema compliance, all models.
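The parse-versus-schema gap can be recomputed directly from the table. The sketch below copies the Tier B Q4_K_M rows and ranks them by the gap in percentage points; the function name `schema_gap` is illustrative only.

```python
# (JSON parse %, schema compliance %) copied from the JSON reliability table,
# Tier B Q4_K_M rows only.
JSON_STATS = {
    "gemma3:4b-q4_K_M": (100.0, 87.0),
    "qwen2.5:7b-q4_K_M": (95.7, 73.9),
    "mistral:7b-q4_K_M": (95.7, 39.1),
    "llama3.1:8b-q4_K_M": (91.3, 91.3),
    "gemma3:12b-q4_K_M": (100.0, 43.5),
    "phi3:14b-q4_K_M": (95.7, 78.3),
}


def schema_gap(stats):
    """Rank models by (parse % - schema %), largest gap first."""
    gaps = {model: round(parse - schema, 1) for model, (parse, schema) in stats.items()}
    return sorted(gaps.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for model, gap in schema_gap(JSON_STATS):
        print(f"{model:22s} {gap:5.1f} pp")
```

On these rows, Mistral 7B and Gemma 3 12B show the widest gaps, while Llama 3.1 8B has none: every response that parsed also matched the schema.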

Inference Efficiency

Latency and throughput matter as much as quality for production deployments. The efficiency-quality frontier shows the real trade-offs between tiers.

| Model | Tier | Tokens / sec | Avg Latency (ms) | Avg TTFT (ms) | ROUGE-L | Efficiency Score* |
|---|---|---|---|---|---|---|
| llama3.2:1b-q4_K_M | A | 226.5 | 1,088 | 171 | 0.390 | 88.3 |
| gemma3:1b-q4_K_M | A | 163.5 | 1,968 | 234 | 0.388 | 63.5 |
| smollm2:1.7b-q4_K_M | A | 157.3 | 1,480 | 192 | 0.408 | 64.2 |
| qwen2.5:1.5b-q4_K_M | A | 167.5 | 1,414 | 207 | 0.398 | 66.7 |
| llama3.2:3b-q4_K_M | A | 98.7 | 2,031 | 316 | 0.436 | 43.1 |
| gemma3:4b-q4_K_M | B | 71.6 | 3,927 | 452 | 0.427 | 30.6 |
| qwen2.5:7b-q4_K_M | B | 48.1 | 4,270 | 606 | 0.462 | 22.2 |
| mistral:7b-q4_K_M | B | 49.0 | 5,900 | 657 | 0.496 | 24.3 |
| llama3.1:8b-q4_K_M | B | 46.6 | 4,506 | 641 | 0.447 | 20.9 |
| mistral:7b-q8_0 | B | 31.2 | 8,760 | 731 | 0.509 | 15.9 |
| llama3.1:8b-q8_0 | B | 29.3 | 6,788 | 724 | 0.454 | 13.3 |
| gemma3:12b-q4_K_M | B | 27.3 | 10,545 | 1,171 | 0.435 | 11.9 |
| phi3:14b-q4_K_M | B | 23.6 | 12,882 | 1,402 | 0.433 | 10.2 |
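One way to read the efficiency-quality frontier from this table is a Pareto sweep over throughput and ROUGE-L. The sketch below is an illustration only; it does not reproduce the table's Efficiency Score, whose formula is not given here.

```python
# (tokens/sec, ROUGE-L) copied from the efficiency table above.
EFFICIENCY = {
    "llama3.2:1b-q4_K_M": (226.5, 0.390),
    "gemma3:1b-q4_K_M": (163.5, 0.388),
    "smollm2:1.7b-q4_K_M": (157.3, 0.408),
    "qwen2.5:1.5b-q4_K_M": (167.5, 0.398),
    "llama3.2:3b-q4_K_M": (98.7, 0.436),
    "gemma3:4b-q4_K_M": (71.6, 0.427),
    "qwen2.5:7b-q4_K_M": (48.1, 0.462),
    "mistral:7b-q4_K_M": (49.0, 0.496),
    "llama3.1:8b-q4_K_M": (46.6, 0.447),
    "mistral:7b-q8_0": (31.2, 0.509),
    "llama3.1:8b-q8_0": (29.3, 0.454),
    "gemma3:12b-q4_K_M": (27.3, 0.435),
    "phi3:14b-q4_K_M": (23.6, 0.433),
}


def pareto_frontier(points):
    """Return models that no other model beats on both speed and quality."""
    frontier = []
    best_quality = float("-inf")
    # Sweep from fastest to slowest; a model stays on the frontier only if it
    # improves on the best quality seen among all faster models.
    ordered = sorted(points.items(), key=lambda kv: (-kv[1][0], -kv[1][1]))
    for name, (_tps, quality) in ordered:
        if quality > best_quality:
            frontier.append(name)
            best_quality = quality
    return frontier


if __name__ == "__main__":
    for name in pareto_frontier(EFFICIENCY):
        print(name, EFFICIENCY[name])
```

On these two axes alone, the frontier runs from Llama 3.2 1B at the fast end to Mistral 7B Q8_0 at the high-quality end. JSON reliability is not part of this sweep, so it should not be read as an overall ranking.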

Key Findings

Mistral 7B leads on quality: both its Q4_K_M (ROUGE-L 0.496) and Q8_0 (0.509) variants top all other models on lexical fidelity, and Q4_K_M posts the highest factual consistency in the benchmark (0.762). It is especially strong on Mid-Level and Strong description tasks.

Llama 3.1 8B Q8_0 achieves a 100% parse rate and 95.7% schema compliance, the highest of any model, while producing zero extraneous output. Q4_K_M is a close second at 91.3% schema compliance.

At Q8_0, Qwen 2.5 1.5B delivers ROUGE-L 0.421 and a 95.7% JSON parse rate, competitive with some 7B models, while running at 121.9 TPS. It is the best choice when memory is the primary constraint.

The Q8_0 vs Q4_K_M ROUGE-L delta is small in every pair, ranging from −0.017 (Gemma 3 4B) to +0.028 (Llama 3.2 3B), with Mistral 7B at +0.013. For speed-sensitive applications, Q4_K_M incurs minimal quality loss while delivering roughly 40–60% higher TPS for most models.
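The quantization trade-off can be recomputed from the full results table. This sketch copies ROUGE-L and TPS for every model tested under both quants and reports the Q8_0 minus Q4_K_M quality delta alongside the Q4_K_M throughput gain; the dictionary layout and function name are illustrative.

```python
# model: (ROUGE-L Q4_K_M, ROUGE-L Q8_0, TPS Q4_K_M, TPS Q8_0),
# copied from the full results table.
QUANT_PAIRS = {
    "gemma3:1b": (0.388, 0.396, 163.5, 146.7),
    "llama3.2:1b": (0.390, 0.378, 226.5, 160.2),
    "qwen2.5:1.5b": (0.398, 0.421, 167.5, 121.9),
    "smollm2:1.7b": (0.408, 0.407, 157.3, 109.6),
    "llama3.2:3b": (0.436, 0.464, 98.7, 65.2),
    "phi3.5:3.8b": (0.353, 0.364, 69.3, 50.7),
    "gemma3:4b": (0.427, 0.410, 71.6, 49.1),
    "qwen2.5:7b": (0.462, 0.474, 48.1, 31.0),
    "mistral:7b": (0.496, 0.509, 49.0, 31.2),
    "llama3.1:8b": (0.447, 0.454, 46.6, 29.3),
}


def quant_tradeoff(pairs):
    """Map model -> (ROUGE-L delta Q8 minus Q4, % TPS gain of Q4 over Q8)."""
    result = {}
    for model, (rouge_q4, rouge_q8, tps_q4, tps_q8) in pairs.items():
        delta = round(rouge_q8 - rouge_q4, 3)
        tps_gain_pct = round(100 * (tps_q4 / tps_q8 - 1), 1)
        result[model] = (delta, tps_gain_pct)
    return result


if __name__ == "__main__":
    for model, (delta, gain) in quant_tradeoff(QUANT_PAIRS).items():
        print(f"{model:14s} ROUGE-L delta {delta:+.3f}  Q4 TPS gain {gain:5.1f}%")
```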

SmolLM2 1.7B Q4_K_M achieves only 26.1% JSON parse rate and 4.3% schema compliance — unusable for structured output. Q8_0 improves to 56.5%/26.1% but remains unreliable. Avoid for JSON pipelines.

Despite reasonable text quality (ROUGE-L 0.436–0.464), Llama 3.2 3B achieves only 47.8–56.5% JSON parse rate — a notable regression vs Llama 3.1 8B. The 3B scale appears insufficient for reliable structured output.

Phi-3.5 3.8B Q4_K_M shows a repetition rate of 0.052, roughly 3.5× to 50× higher than every other configuration (0.001–0.015), and the worst length compliance at 30.4%. Despite high factual consistency (0.749), it is not reliable for production text generation.

Gemma 3 4B achieves a 100% JSON parse rate in both quants, though schema compliance drops to 87.0% (Q4_K_M) and 69.6% (Q8_0). Notably, it adds extraneous commentary around the JSON in 100% of runs, so production use requires output stripping.
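Output stripping of this kind can be sketched with the standard library alone. The helper below is a hypothetical illustration, not the benchmark's own post-processing: it scans for the first `{` that begins a decodable JSON object and ignores any commentary before or after it.

```python
import json


def extract_json_object(raw):
    """Return the first JSON object embedded in raw text, or None if absent."""
    decoder = json.JSONDecoder()
    start = raw.find("{")
    while start != -1:
        try:
            # raw_decode parses one JSON value starting at `start` and
            # tolerates trailing text after it.
            obj, _end = decoder.raw_decode(raw, start)
            if isinstance(obj, dict):
                return obj
        except json.JSONDecodeError:
            pass  # this brace did not start valid JSON; try the next one
        start = raw.find("{", start + 1)
    return None


if __name__ == "__main__":
    response = 'Here is the description you asked for: {"title": "A"} Hope this helps!'
    print(extract_json_object(response))
```

A stripped object should still be validated against the schema afterwards, since removing the wrapper text does nothing for missing or misnamed fields.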

Gemma 3 12B and Phi-3 14B offer ROUGE-L scores (0.433–0.435) below Llama 3.1 8B (0.454) while running at under 28 TPS with 10–13 second average latency. No quality advantage over 7–8B models was found.

No model refused benign synthetic inputs. Repetition rates are low across the board (0.015 or below) except for Phi-3.5 Mini Q4_K_M (0.052). Language consistency was 100% for all models, with no unexpected code-switching observed.

Recommendations

| Use Case | Recommended Model | Reason |
|---|---|---|
| Edge / mobile, text output | qwen2.5:1.5b-q8_0 | Best quality-per-parameter in sub-3B range. ~122 TPS, ROUGE-L 0.421, low repetition. |
| Edge / mobile, JSON output | qwen2.5:1.5b-q8_0 | 95.7% parse rate at only 1.5B params. Significantly more reliable than Llama 3.2 3B for structured tasks. |
| Max throughput, acceptable quality | llama3.2:1b-q4_K_M | 226 TPS, the fastest in the benchmark. Use for high-volume brief description tasks where latency is critical. |
| Server-light, text quality priority | mistral:7b-q4_K_M | Top ROUGE and factual scores at ~49 TPS. Best balance of quality and speed in Tier B for text tasks. |
| Server-light, JSON reliability priority | llama3.1:8b-q4_K_M | 91.3% schema compliance, zero extraneous output, 100% length compliance. The most reliable structured-output model. |
| Best overall quality (unconstrained) | mistral:7b-q8_0 | Highest ROUGE-L (0.509) with strong factual consistency (0.741). Cost: ~31 TPS and ~8.8 s average latency. |
| Avoid for JSON pipelines | smollm2:1.7b, llama3.2:3b | Both fail to reliably produce valid JSON. Deploy for text-only tasks only. |
| Avoid for any production use | phi3.5:3.8b-q4_K_M | Highest repetition rate (0.052), worst length compliance (30.4%), high extraneous output (65.2%). Not production-ready. |